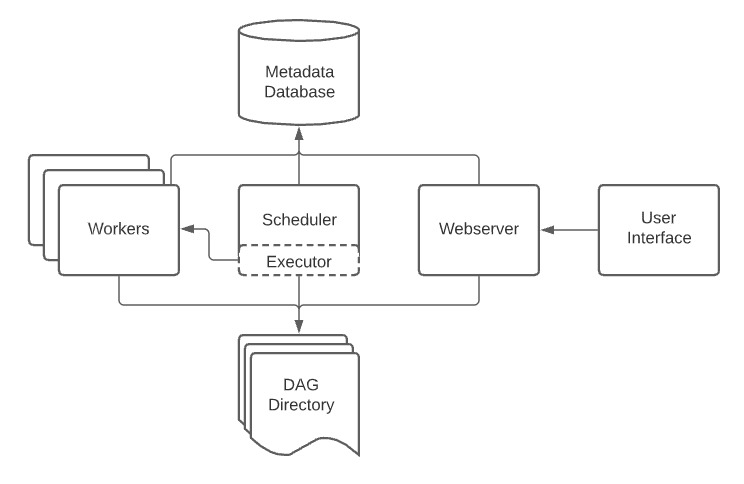

Apache Airflow is an open-source tool that helps you schedule, monitor, and manage workflows. Corporate operations such as backups, data warehousing, and data testing can all be automated, which saves a lot of time and human resources. With a platform like Apache Airflow, scheduling and managing these workflows is a breeze.

Using Airflow, users can define workflows as DAGs (Directed Acyclic Graphs) of jobs. Airflow's robust user interface makes it simple to visualize pipelines in production, track progress, and resolve issues. It integrates with numerous data sources, and it can alert users by email or Slack when a job completes or fails. Because it is distributed, scalable, and adaptable, it is well suited to orchestrating complicated business logic.
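To make the DAG idea concrete, here is a minimal sketch in plain Python. It does not use Airflow itself; it relies on the standard-library `graphlib` module (Python 3.9+), and the job names are hypothetical. It only shows how dependencies between jobs determine a valid run order and why cycles are not allowed.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical job names; each key maps to the set of jobs it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}


def execution_order(deps):
    """Return one valid order in which the jobs can run."""
    return list(TopologicalSorter(deps).static_order())


def has_cycle(deps):
    """Return True if the dependencies cannot form a DAG."""
    try:
        execution_order(deps)
    except CycleError:
        return True
    return False


order = execution_order(dependencies)
print(order)
```

A scheduler such as Airflow applies the same principle at a larger scale: a job starts only after every job it depends on has finished.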

Apache Airflow Primary Functions

- Define, schedule, and monitor workflows

- Organize third-party systems to execute tasks

- Provide a web interface for enhanced visibility and management capabilities

Key Features of Airflow

- Easy to use

Anyone familiar with the Python programming language can set up an Apache Airflow data pipeline. It lets users build machine learning models, manage infrastructure, and transfer data, with no restriction on pipeline scope. Users can also resume a pipeline from where it left off, without having to restart the entire operation.

- Modern user interface

The graphical interface of Apache Airflow guides the user through administrative operations such as workflow management and user administration. Its auto-refresh feature is another highlight, making live monitoring much more convenient.

- Highly Scalable

Airflow can execute thousands of tasks per day, running many of them in parallel.

- Robust Integrations

Airflow provides many ready-to-use operators for executing tasks on Google Cloud Platform, Amazon Web Services, Microsoft Azure, and many other third-party services. As a result, Airflow is simple to integrate into existing infrastructure and extends to next-generation technologies.

- Pure Python

Airflow users can create data pipelines using common Python features, such as datetime formats for scheduling and loops for dynamically generating tasks. This gives users as much flexibility as possible when creating data pipelines.
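The two Python features mentioned here can be sketched without installing Airflow. In the example below, plain dictionaries stand in for Airflow tasks, and the table names, the `export` command, and the start date are all hypothetical. In a real Airflow DAG the loop would create operator instances instead.

```python
from datetime import datetime, timedelta


def build_tasks(tables):
    """Create one hypothetical 'export' task description per table."""
    tasks = {}
    for table in tables:
        task_id = f"export_{table}"
        tasks[task_id] = {"command": f"export --table {table}"}
    return tasks


def next_runs(start, interval, count):
    """Return the first `count` run times, spaced `interval` apart."""
    return [start + i * interval for i in range(count)]


# A loop generates the tasks; datetime arithmetic generates the schedule.
tasks = build_tasks(["orders", "customers", "invoices"])
runs = next_runs(datetime(2024, 1, 1, 2, 0), timedelta(days=1), 3)

for run in runs:
    print(run.isoformat(), sorted(tasks))
```

Adding a table to the list is enough to add a task, which is the main appeal of defining pipelines in code.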

Explore Some Alternatives to Apache Airflow

1. JS7 (JobScheduler)

JobScheduler, a workload automation tool, enables the automation and integration of corporate processes and workflows. It is used to automatically launch executable files and shell scripts and to run database procedures. JobScheduler stores all information in a back-end database. Cross-platform scheduling for Windows, Linux, and most Unix systems is among the highlights.

Scheduling Remotely

- Jobs and job chains can be processed across JobScheduler instances and operating systems via distributed processing.

- Lightweight JobScheduler Agents carry out tasks on behalf of a Master JobScheduler. Agents do not require any configuration.

- SSH-based agentless scheduling: programs are executed on remote hosts over SSH.

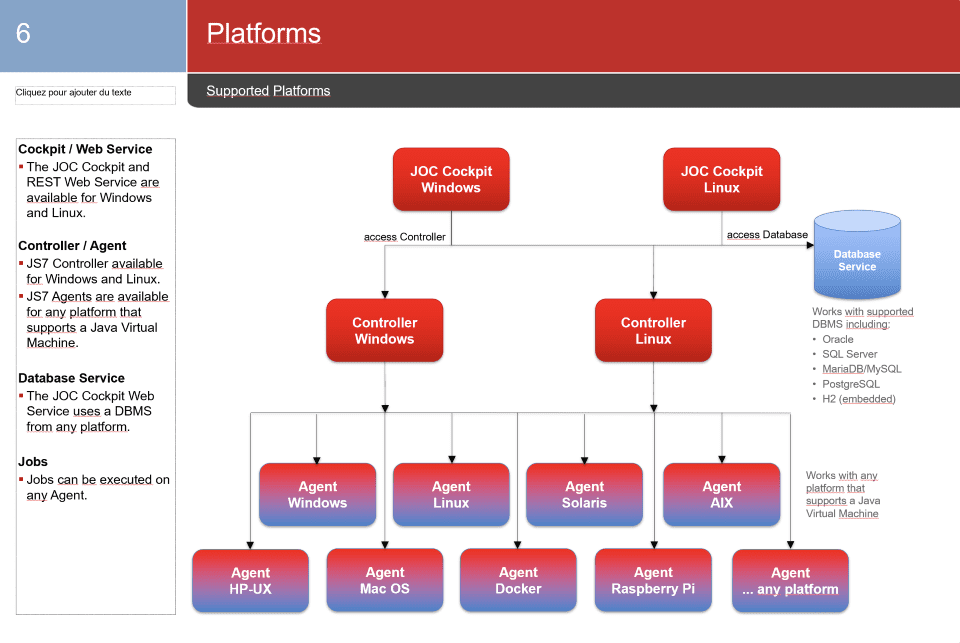

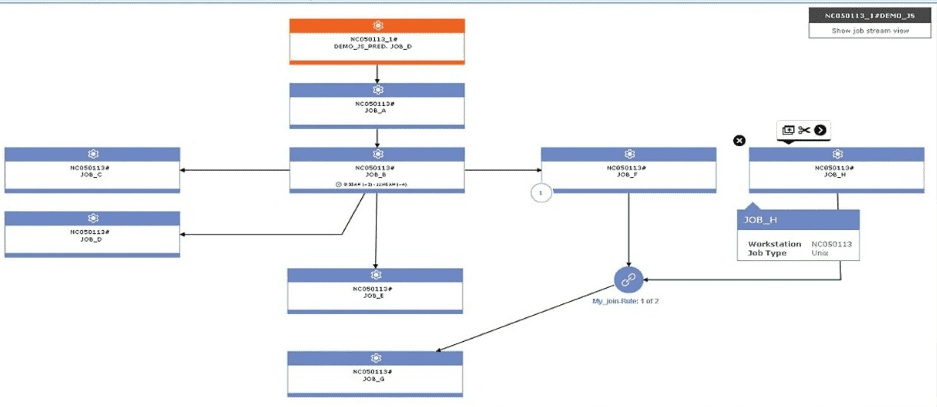

JS7 JobScheduler consists of three components: a Controller (manages configuration and orchestrates Agents), a Universal Agent (executes jobs on any machine), and the JOC Cockpit user interface (monitoring and control in near real time).

- JOC Cockpit

- Controller

- Agent

JS7 (JOC-Cockpit)

The JOC Cockpit is a graphical user interface. It provides security, real-time information, and a responsive design, and it manages the entire scheduling inventory.

Primary Functions:

- Automate the execution of executable files, shell scripts, and database procedures.

- Trigger job starts from events, including calendar events, incoming files detected by monitoring, and API events raised by external programs.
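Of the trigger types listed above, monitoring for incoming files is the easiest to illustrate. The sketch below is a generic polling approach in plain Python, not JS7's actual implementation; the function name and the idea of tracking a `seen` set are assumptions made for the example.

```python
import os


def new_files(directory, seen):
    """Return files in `directory` that are not yet in `seen`.

    The `seen` set is updated in place, so each file is reported once.
    """
    current = set(os.listdir(directory))
    arrived = sorted(current - seen)
    seen.update(arrived)
    return arrived
```

A scheduler would call a function like this at a fixed interval and start a job for every file it returns.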

Key Features of JS7

- Open Source: JS7 is an open-source platform available under the GNU General Public License version 3, with a commercial license for enterprise users. JS7 offers a free online demo for 24 hours. You can create multiple demo accounts to understand JS7 JobScheduler better.

- Platforms and Operating Systems: JobScheduler is compatible with Windows and Linux operating systems. Other operating systems are supported to varying extents, for example for use with JobScheduler Agents and agentless scheduling.

- Multiple Platform Support: The Controller runs on Unix and Windows Server. The Universal Agent runs on all operating systems that support a Java VM.

- High-availability Cluster: A JobScheduler backup cluster offers fail-safe operation and, with automatic fail-over, fault tolerance. A fail-safe system has a primary JobScheduler and at least one backup, each running on a separate computer.

- Load Sharing: Using multiple JobSchedulers for a large volume of data will significantly reduce processing time. It will also increase availability. In load-sharing mode, processing activities are shared among multiple JobSchedulers. These handle distributed orders on multiple hosts.

- Unlimited Schedule/Calendars and their Customization: In JS7, you can create a calendar directly, without importing any job. To exclude some days from a calendar, you can use Excluded Frequencies on the calendar and Restrictions on schedules.

- Super REST API: JS7 provides a REST API, so it can be connected to any application, server, or service.

- Predefined Jobs Library: JS7 includes predefined job libraries, such as File Transfer and File Watching.

- Different graphical user interfaces: JS7 has a built-in interface for job control and a graphical user interface for managing the configurations of multiple JobSchedulers on various server platforms.

- Web Services: You can use web services in JS7 to run orders, workflows, and jobs, as well as to cancel, suspend, and resume orders. A PowerShell module is also available for accessing the REST web services API.
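The calendar feature listed above, where certain days are excluded from a schedule, comes down to a simple filtering step. The sketch below shows that logic in plain Python. It is not JS7 configuration, and the dates and the `run_dates` helper are hypothetical.

```python
from datetime import date, timedelta


def run_dates(start, end, excluded):
    """Yield every date from `start` to `end` inclusive that is not excluded."""
    day = start
    while day <= end:
        if day not in excluded:
            yield day
        day += timedelta(days=1)


# Hypothetical excluded days for a daily schedule.
holidays = {date(2024, 12, 25), date(2024, 12, 26)}
schedule = list(run_dates(date(2024, 12, 24), date(2024, 12, 27), holidays))
print(schedule)
```

The same idea applies to any scheduler that combines a base calendar with a list of excluded days.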

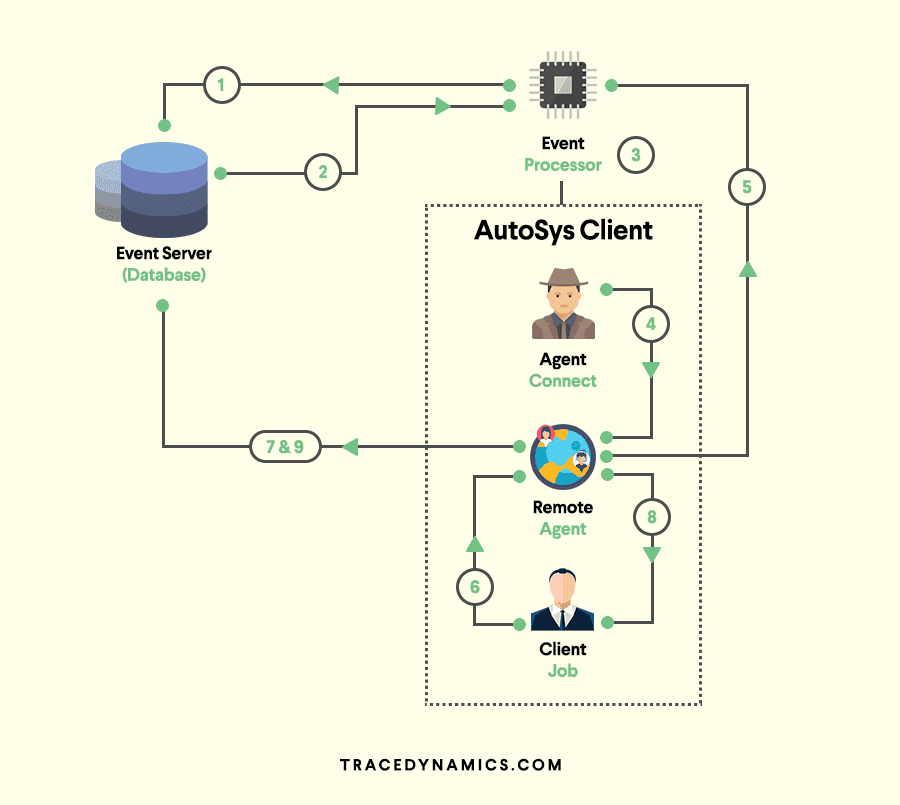

2. AutoSys

AutoSys is a job control system that automates scheduling, monitoring, and reporting. Jobs can run on any AutoSys-configured system connected to a network, and they run automatically without human involvement. Typical tasks include transferring files on a regular schedule, emailing reports, and importing data from one source to another. Any such task can be automated in AutoSys by scheduling a batch file or executable.

Job: A job is any task that must be performed in AutoSys. It can be a single command, an executable file, a Windows batch file, or a script. The set of attributes that defines how a job runs is written in Job Information Language (JIL).

There are two ways to define or insert a job in AutoSys:

- Using the Graphical User Interface (GUI)

- Using the Job Information Language (JIL)

Jobs and their JIL definitions are stored on the centralized AutoSys server. To perform job operations, the AutoSys server exchanges data with the remote AutoSys agent, which is installed on a remote Windows or Unix machine.
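To give a feel for what a JIL definition looks like, the sketch below renders one from Python. The attribute names (`insert_job`, `job_type`, `command`, `machine`, `owner`, `date_conditions`, `days_of_week`, `start_times`) are ones commonly seen in JIL, but treat them as assumptions and check them against the reference for your AutoSys version. The job name, host, owner, and script path are hypothetical.

```python
def render_jil(name, attributes):
    """Render a job definition as JIL-style 'key: value' lines."""
    lines = [f"insert_job: {name}"]
    for key, value in attributes.items():
        lines.append(f"{key}: {value}")
    return "\n".join(lines)


# Hypothetical nightly backup job; every value here is illustrative.
jil = render_jil(
    "daily_backup",
    {
        "job_type": "CMD",
        "command": "/opt/scripts/backup.sh",
        "machine": "unixhost01",
        "owner": "batchuser",
        "date_conditions": 1,
        "days_of_week": "all",
        "start_times": '"02:00"',
    },
)
print(jil)
```

Generating definitions this way can help keep many similar jobs consistent, although the resulting text still has to be loaded into AutoSys with its own tooling.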

Key Features of AutoSys

- Database Compatibility: Supports databases such as H2, Microsoft SQL Server, Oracle, Sybase, and IBM DB2.

- Multiple Platform Support: Supports Windows, IBM iSeries, HP NonStop, Unix, Solaris, AIX, Linux, HP-UX servers, Unix Sybase SQL server, and mainframes.

- Super REST API: This capability is available in AutoSys, but it requires more manual configuration.

- Unified Workflow Mapping and Development Environment: Workflow visualization and mapping are available, but there is no centralized monitoring and development.

- Unlimited Schedule/Calendars: In AutoSys, you can create a calendar by using the Import JIL option.

- Cloud Support: Although AutoSys supports AWS, Azure, and Google Cloud, it does not support the Docker Platform.

- Scheduling and Execution of ETL: AutoSys can schedule and execute ETL tool operations, such as DataStage ETL jobs.

- Customization of Schedules, Calendars (having user-defined dates as calendar frequencies): If you want to run jobs in AutoSys while skipping certain holidays, you can use the holiday calendar and add the jobs to its exclude list.

- Web Services: A web server provides the runtime environment for web services. Using web services, you can perform many tasks in AutoSys, such as retrieving a job's status and performing job actions such as start, stop, and cancel.

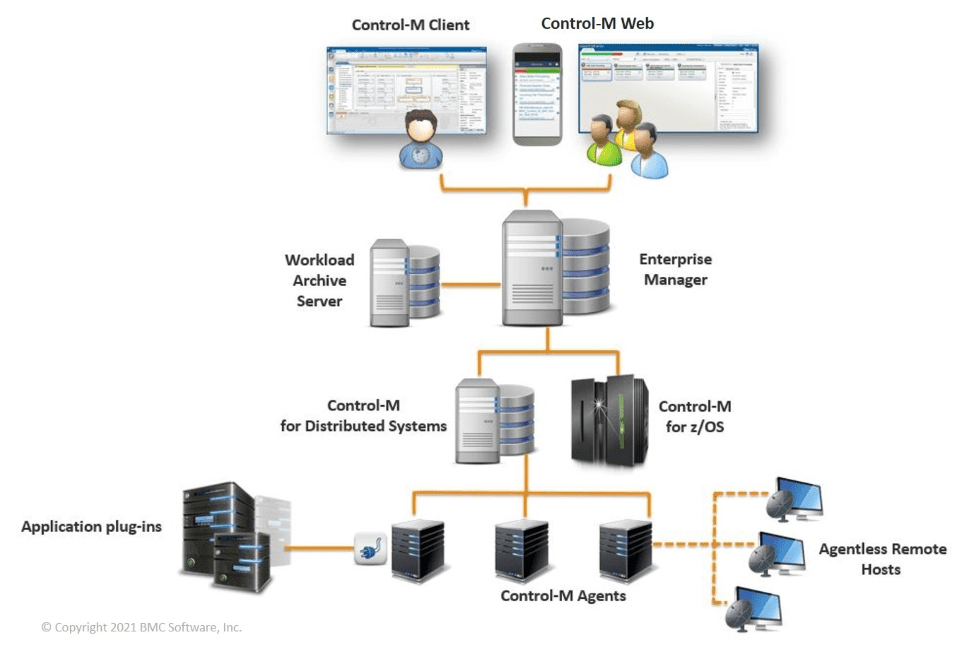

3. Control-M

Control-M makes it easier to manage application and data workflows on-premises or as a service. It simplifies the creation, definition, scheduling, management, and monitoring of production workflows, ensuring visibility and reliability and improving SLAs. It integrates with the AWS, Azure, and Google Cloud platforms, simplifying workflows across hybrid and multi-cloud environments. It delivers data-driven results more quickly and gives you complete control over your file transfer operations.

Key Features of Control-M

- Simplify and scale data pipelines:

(a) Get a complete picture of your data pipelines, from intake to processing to analytics.

(b) Ingest and process data from Hadoop, Spark, EMR, Snowflake, and Redshift.

(c) Resolve SLA problems before they become critical.

- Simplify application workflow orchestration across cloud environments:

(a) Orchestrate complicated workflows across hybrid clouds from start to finish.

(b) Provision on any machine, at any time, including on AWS and Azure.

(c) Get out-of-the-box support for cloud services, including AWS Lambda, Step Functions, and Batch; Azure Logic Apps, Functions, and Batch; and AWS Glue, Azure Data Factory, AWS Databricks, Azure Databricks, Boomi, GCP Dataflow, GCP Cloud Functions, GCP Dataproc, Informatica Cloud, Power BI, and UiPath.

(d) Utilize the scalability and flexibility of cloud ecosystems.

- Easy integration of any application workload:

(a) Controlling when apps are integrated allows you to innovate more quickly.

(b) Improve critical app services by creating job types based on your service requirements.

(c) Realize ROI by leveraging crowd-sourced job types from an expert community.

- Simplify and automate business application delivery:

(a) Improve service delivery by gaining better insight into, and control over, the creation of application workflows.

(b) Improve business agility with a scalable solution that speeds up change requests by up to 80%.

(c) Improve app quality by automating site standard enforcement.

(d) Create workflows with drag-and-drop functionality, which reduces the need for manual scripting.


4. IBM Workload Automation-Tivoli

IBM Tivoli Workload Automation integrates with existing data center systems and cloud resources, simplifying integration between service management tools and improving day-to-day operational productivity. It also improves operational efficiency through mobile self-service dashboards and workload management features.

IBM Workload Automation integrates closely with containers. It offers a self-service way of managing jobs and job streams and allows more flexible reruns. It lets you launch and monitor operations from mobile devices and tablets. IBM Workload Automation helps you manage batch and real-time hybrid workloads, optimize workload management, improve task management, and simplify operations.

Key features of IBM Workload Automation

- Mobile self-service monitoring and management dashboard: Tivoli Workload Scheduler has dashboards that allow business users to monitor the health of their workloads and to execute simple recovery steps.

- Firewall friendly: IBM Tivoli Workload Automation has been enhanced to allow Dynamic Agents to communicate directly across the Internet and through Network Address Translation (NAT), which supports higher workload delivery. The new agent-to-broker protocol requires only connections from the agent to the broker. This arrangement also works with proxy servers.

- Self-service automation: Use catalogs and services to submit regular business activities, and run and monitor processes from a mobile device on demand.

- Anomaly detection in workloads: Use AI-powered analysis of historical data to spot anomalies in the overall workload or in specific jobs.

- Managed file transfer capabilities: With the new Workload Designer, part of the Dynamic Workload Console, you can easily transfer files and streamline the modeling of automated workflows.

- Easily define workflows: The Workload Designer provides a modeling user interface that offers a unified access point for designing every scheduling object, with improved contextual help and graphical views.
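The anomaly detection feature listed above relies on historical data about job runs. The sketch below illustrates the general idea with a simple z-score check on job durations. It is a stand-in technique chosen for illustration, not IBM's method, and the durations, threshold, and function name are hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it lies more than `threshold` sample standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical past run durations of one job, in seconds.
durations = [60, 62, 58, 61, 59, 60]
print(is_anomalous(durations, 61))
print(is_anomalous(durations, 120))
```

Production tools typically use richer models that account for trends and seasonality, but the principle of comparing a new run against its own history is the same.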

Conclusion

To sum up, workload automation solutions provide a centralized automation hub for scheduling and monitoring operations. Through the workload automation platform, business processes such as project management, ERP, and ETL tools can work together seamlessly with minimal human interaction.

The workload automation system should be adaptable and able to expand with the business. Another element to consider when selecting a tool is the support provided for it. Good support simplifies the onboarding process and increases the tool’s dependability.

In this article, we looked at the best Apache Airflow alternatives. My top recommendation is JS7 (JobScheduler).